New fines in Azerbaijan: what will the laws on children’s rights, begging and artificial intelligence change?

New fines in Azerbaijan

Three separate issues have entered Azerbaijan’s legislative agenda: a new law on the protection of children, tougher fines for begging and regulation of risks linked to artificial intelligence, particularly deepfake technologies.

Together, these topics raise a broader question. Does the state intend to manage growing social and digital challenges mainly through fines and punishment? Or will authorities also develop social services and legal safeguards in parallel?

If one asks “why now?”, part of the answer relates to the country’s international image.

Another explanation concerns the domestic agenda. Annual reports by international organisations often criticise the situation of media and civil society in Azerbaijan. They also question the use of control mechanisms and sanctions. In this context, authorities justify new rules and penalties with arguments about “security” and “public order”. At the same time, critics warn about possible risks of abuse.

The regional context also matters. In 2019, Georgia’s parliament adopted a Code on the Rights of the Child, which UNICEF described as an important step. Armenia has had a law on children’s rights since 1996, and lawmakers have recently discussed updating the child protection system.

In other words, the issue of children’s rights is not new in the region. However, Azerbaijan’s new draft law seeks to give a modern legal framework to emerging risks, including those arising in the online environment.

Begging: do fines reduce poverty — or turn it into a “crime”?

In Azerbaijan’s public debate, begging usually appears as a combination of two different problems. One concerns poverty and social vulnerability. The other involves exploitation, especially the use of children and people with disabilities for begging.

The draft law on children’s rights gives special attention to the obligation to inform state authorities when a child needs assistance. People must also report signs of violence or exploitation. In principle, this approach could create a legal basis for social support and protection. It could apply in cases where authorities identify a child who has been forced to beg in the street.

However, once fines become the main instrument, a different risk emerges. A fine imposed on people who ask for money simply to survive may not reduce poverty. It may instead deepen it.

European experience offers two important approaches.

- Many countries and cities regulate aggressive begging separately. These rules focus on harassment of passers-by or situations linked to public safety.

- In 2021, the European Court of Human Rights ruled in the case of Lăcătuș v Switzerland. The court stressed that a general ban on begging in public spaces, combined with fines, can be disproportionate for a person in a vulnerable situation. Judges found a violation of Article 8 of the European Convention on Human Rights.

The court’s comparative analysis in that case also showed that Europe has no single model. Some countries follow a stricter approach, while others rely more on social policies.

For Azerbaijan, the key question is therefore not whether fines exist, but whom they target. If authorities focus on networks that organise begging and exploit people — especially children — punishment may have a clearer justification. If enforcement targets people who end up on the street outside any social protection system, and who have no alternatives, it may look like an attempt to solve a social problem through policing.

This debate brings the discussion back to the link between fines and social services. The new draft law on children’s rights emphasises reporting mechanisms, local commissions and a hotline. If these mechanisms do not function in practice, fines are more likely to prove ineffective.

Artificial intelligence: deepfake risks, personal data and media freedom

Discussion of artificial intelligence in Azerbaijan has moved beyond talk of “innovation”. It is increasingly framed as an issue of information and personal security. At a session of the Milli Majlis, MP Azay Guliyev said there is a legislative gap in the area of “digital cloning” and deepfake technologies. In his view, current mechanisms cannot effectively counter these risks.

Deepfake technology can be explained simply. Artificial intelligence imitates a person’s face, voice or behaviour. It then produces fake video or audio that looks real.

The consequences may appear in three main areas:

- Damage to personal reputation and blackmail, especially through the creation of fake intimate images of women.

- Fraud, for example when criminals demand money using a cloned voice.

- Public and political manipulation, such as false statements attributed to officials or well-known figures.

The fact that parliament is discussing these risks shows that the issue has already entered the national security agenda.

The authorities also approach the issue from a technical perspective. Tural Mammadov, deputy head of the State Service for Special Communication and Information Security, said Azerbaijan is preparing a document that will set security requirements for artificial intelligence systems. Officials will decide whether these systems can transfer data to cloud environments depending on the type of data they process and how they handle it.

He also said the authorities have created two centres with high computing capacity to support this work. This approach clearly shows the state’s priorities: data classification, protection of government data and control over digital infrastructure.

This debate can be viewed in an international context by comparing two regulatory models.

- The European Union model: unified rules and transparency requirements. The European Union’s AI Act (Regulation (EU) 2024/1689) regulates artificial intelligence according to levels of risk and introduces its provisions in stages. According to the European Commission’s official timetable, the transparency requirements of the AI Act — including disclosure and labelling mechanisms for deepfakes — will start to apply on 2 August 2026. The idea is not so much to ban the technology as to establish transparency rules that prevent people from being misled, for example by clearly indicating that “this content was generated artificially”.

- The United States model: fragmented regulation and a focus on specific harm. Instead of a single “AI law”, the United States relies on a combination of instruments. These include voluntary risk management frameworks developed by NIST, such as AI RMF 1.0, and new laws that target particular forms of harm.

For example, the United States adopted the federal Take It Down Act in 2025. The law targets the distribution of intimate images without consent, including AI-generated “intimate deepfakes”, and obliges platforms to remove such content quickly. However, the law has also faced criticism. Some voices in the US warn that it could affect freedom of expression and allow misuse of the takedown mechanism if authorities or platforms apply it incorrectly or excessively.

In Azerbaijan, there is a risk that the framework of “information security” could sometimes become an additional tool for controlling media and civil society. The threat posed by deepfakes is real. However, the language of the law and the way authorities apply it should not result in journalists, researchers and critics falling under broad interpretations such as “fake content” or “manipulation”.

Violence against children: what will the new law change?

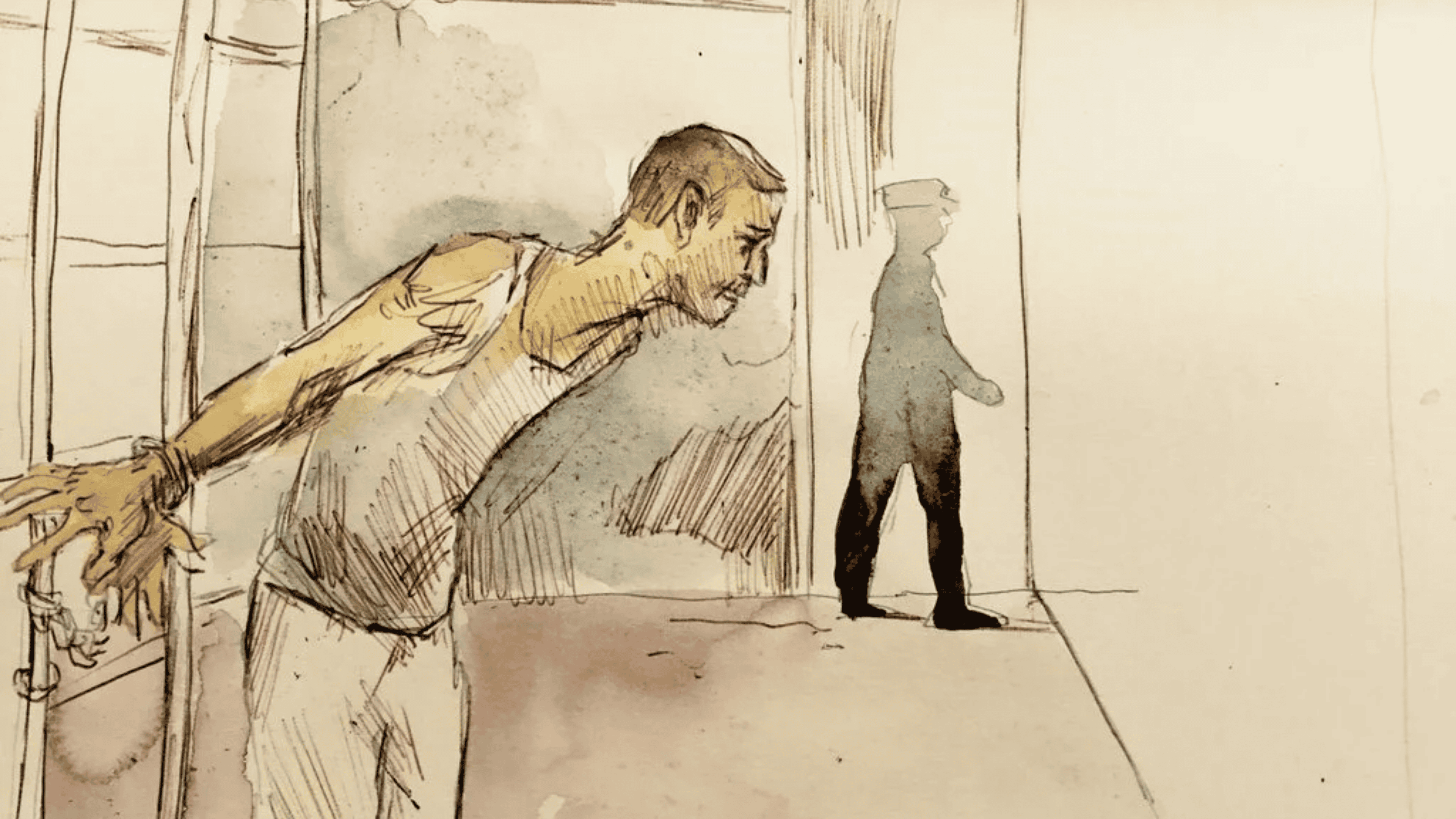

According to the official text of the draft law presented to the Milli Majlis, the concept of “violence against children” receives a broad definition. It includes any action or inaction that threatens a child’s life, health, sexual integrity, honour or dignity. It also covers acts that cause, or could cause, physical or psychological suffering.

A key innovation in the draft law concerns the digital sphere. The text states that violence can also occur “through the use of internet information resources or information and telecommunication networks”. In other words, the law no longer treats violence only as a physical event that occurs “at home” or “in school”. It also recognises online insults, harassment and other forms of digital pressure.

A second major change introduces the principle of mandatory notification. The draft states that parents, as well as staff of educational, medical and social institutions who supervise children, must report signs of violence to the relevant authorities while respecting confidentiality. The same article also mentions the option of contacting a “children’s hotline”.

The document also stresses the obligation of individuals and legal entities to report a child who requires assistance.

Media coverage describes the aim of the draft law as the creation of a unified state policy to protect children’s rights. The text also explains concepts such as early marriage and reminds readers that the law imposes liability for such actions.

UNICEF’s approach to child protection offers a useful framework for assessment. In its strategic documents, UNICEF defines child protection as a system that prevents and responds to exploitation, violence, neglect and harmful practices. The organisation emphasises that this approach rests on the Convention on the Rights of the Child.

UNICEF also stresses the need to strengthen the overall system. A law alone is not enough. Authorities must ensure coordination, services, trained personnel and functioning mechanisms. The principle of mandatory notification will be tested in this context. If a report to the police or a commission does not lead to measures such as placing the child in a safe environment, providing psychological support, working with the family and ensuring rehabilitation, the mechanism may remain only on paper.

This issue also involves a delicate balance. For part of society, the idea of “family values” implies narrower limits on intervention in matters related to child protection. Others argue for stronger safeguards for children’s rights in line with modern human rights standards. The law’s reference both to confidentiality and to the child’s right to protection from violence can be seen as an attempt to strike that balance.

One positive aspect is that Azerbaijan’s draft law broadens the legal definition of violence against children. It also explicitly recognises violence carried out through the internet. This signals that online abuse and harassment are being treated as serious problems.

The idea of mandatory reporting formally expands opportunities for early intervention. If a teacher or a doctor suspects violence, it becomes harder to say “I will not interfere”. At the same time, the development of security requirements for artificial intelligence and data processing shows that the state does not intend to leave digital transformation without oversight.
